Anthropic's Claude Agents Learn to 'Dream': A Leap in AI Autonomy and Memory

Anthropic's latest feature for Claude Managed Agents, called "dreaming," is meant to let AI agents learn from their own work. It allows agents to review past interactions, identify key takeaways, and refine their strategies without a human curating each lesson. Together with doubled 5-hour usage limits for Pro and Max users of Claude Code, the announcement points toward agents that take on longer, more complex tasks and improve as they go, in settings from customer service to scientific research.

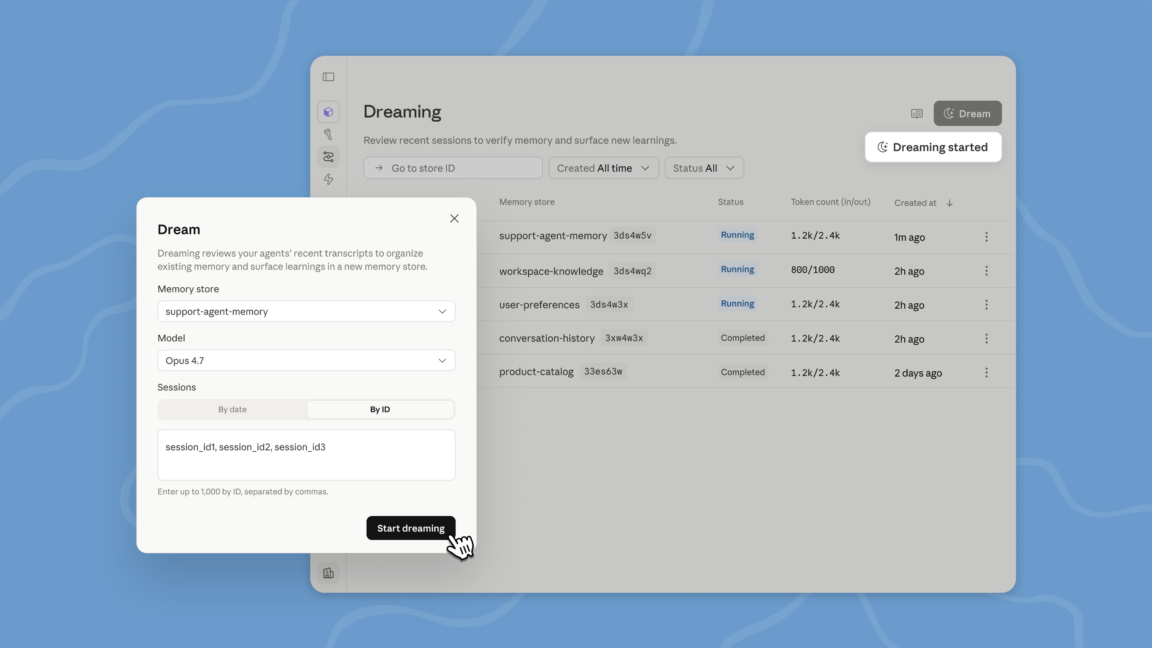

SAN FRANCISCO - Anthropic, an AI safety and research company, has unveiled a new feature for its Claude Managed Agents: the ability to "dream." Announced at the Code with Claude developers' conference, the name is a metaphor. The feature has nothing to do with REM cycles or subconscious narratives; it is a self-reflective process in which agents revisit their recent work to strengthen their long-term memory and operate with less supervision. It reflects a broader push to move agents beyond executing individual tasks toward learning continuously from experience.

Machines that learn from their own experience have been a goal of AI research for decades, though the idea has mostly lived in academic papers and futuristic visions. Claude's "dreaming" capability brings a version of it into a commercial product. The process involves agents autonomously reviewing recent events, identifying critical insights, and extracting actionable knowledge. This self-analysis is meant to help them improve their performance, adapt to new situations, and develop more robust strategies over time, without a human explicitly programming each new lesson. If it works as described, it is a step toward agents that refine their understanding of their assigned tasks as they go.

The Mechanics of AI 'Dreaming': Self-Reflection in Action

At its core, Claude's "dreaming" is a form of self-review aimed at improvement. Imagine an AI agent tasked with handling complex customer support inquiries. Without such a mechanism, the agent operates on its training and the instructions it was given. If it encounters a novel problem, it may struggle or need a human to update its knowledge base. With "dreaming," the agent could, for instance during periods of low activity or scheduled downtime, review its past interactions. It might spot patterns in customer complaints, discover more efficient ways to resolve common issues, or recognize nuances in language that previously led to misunderstandings.

This retrospective analysis is not just about logging data; it is about synthesizing information into actionable insights. The agent might generate new internal rules, update its understanding of specific topics, or even propose changes to its own operational parameters. This feedback loop lets the agent become more effective without constant human oversight. It is akin to a human employee reflecting on their workday, learning from mistakes, and planning how to improve tomorrow. The capability is likely to matter most for tasks that require long-term engagement and adaptation, such as project management, research assistance, or complex data analysis.
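To make the loop concrete, here is a minimal sketch of an offline "dreaming" pass over a support agent's session log. It is hypothetical: it does not show Anthropic's actual interface for Managed Agents, and `Interaction`, `MemoryNote`, `dream`, and `min_support` are illustrative names. A real agent would presumably have the model itself read transcripts and write its takeaways; here simple frequency counting stands in for that step, turning problems that recur unresolved into flags and fixes that worked repeatedly into reusable notes, each tied to the sessions that support it.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Interaction:
    """One logged support session (hypothetical schema)."""
    session_id: str
    issue: str             # short label for the customer's problem
    resolved: bool
    resolution: str = ""   # what the agent did, if the issue was resolved


@dataclass
class MemoryNote:
    """A takeaway the agent keeps for future sessions (hypothetical schema)."""
    text: str
    sources: list[str] = field(default_factory=list)  # session ids that support the note


def dream(log: list[Interaction], min_support: int = 2) -> list[MemoryNote]:
    """Offline reflection pass: turn patterns that recur in the log into notes.

    Frequency counting stands in for the model-driven review a real agent
    would presumably perform over its transcripts.
    """
    notes: list[MemoryNote] = []

    # 1. Problems that keep going unresolved become flags for attention.
    unresolved = Counter(i.issue for i in log if not i.resolved)
    for issue, count in unresolved.items():
        if count >= min_support:
            ids = [i.session_id for i in log if not i.resolved and i.issue == issue]
            notes.append(MemoryNote(f"Recurring unresolved issue: {issue} ({count} sessions)", ids))

    # 2. Fixes that worked at least min_support times become reusable playbook entries.
    fixes = Counter((i.issue, i.resolution) for i in log if i.resolved and i.resolution)
    for (issue, resolution), count in fixes.items():
        if count >= min_support:
            ids = [i.session_id for i in log
                   if i.resolved and (i.issue, i.resolution) == (issue, resolution)]
            notes.append(MemoryNote(f"For '{issue}', '{resolution}' worked in {count} sessions", ids))

    return notes


# Example run over a small, made-up log.
log = [
    Interaction("s1", "password reset", True, "send reset link"),
    Interaction("s2", "password reset", True, "send reset link"),
    Interaction("s3", "billing dispute", False),
    Interaction("s4", "billing dispute", False),
    Interaction("s5", "login loop", False),
]
for note in dream(log):
    print(note.text, note.sources)
```

On the sample log the pass yields two notes: a flag for the billing disputes in sessions s3 and s4, and a playbook entry for password resets backed by s1 and s2. The single login-loop session falls below `min_support`, a threshold that keeps one-off events from hardening into rules. Keeping source ids on every note also makes each takeaway traceable, which matters once someone needs to audit what the agent has learned.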

Historical Context: From Rule-Based Systems to Autonomous Learning

The road to AI "dreaming" runs through several paradigm shifts in artificial intelligence. Much of early AI was symbolic and rule-based, culminating in the expert systems that flourished in the 1970s and 1980s. These systems operated on explicit, hand-coded rules and had no inherent ability to learn or adapt beyond their initial programming. Early chess programs are a familiar example: they combined brute-force search with hand-tuned evaluation rules and opening books, and could play strong moves without anything resembling a human understanding of the game.

The rise of machine learning, and later of deep neural networks, transformed AI. These models could learn directly from data, identifying complex patterns and making predictions without explicit programming for every scenario. However, even these systems typically required massive datasets and extensive human supervision during training. Their learning was largely confined to the training phase, and adapting to entirely new, unforeseen situations remained a challenge.

Claude's "dreaming" capability differs from these earlier approaches in where the learning comes from. Instead of learning passively from external data, the agent learns actively and introspectively from its own operational experience. Self-directed improvement of this kind is often described as a trait of more advanced cognitive systems, though how far a feature like this moves AI toward Artificial General Intelligence (AGI), where machines can perform any intellectual task a human can, remains an open question.

Implications for Business, Research, and Everyday Life

The introduction of "dreaming" agents could have wide-ranging implications across sectors. For businesses, it promises automation that gets better with use. Imagine customer service agents that continuously improve their problem-solving skills, or data analysts that refine their analytical approaches based on past insights. This could lead to:

* Enhanced Customer Experience: Faster, more accurate, and more personalized support.
* Increased Operational Efficiency: Automating complex workflows with less human intervention.
* Accelerated Research and Development: AI agents assisting scientists by autonomously refining experimental parameters or identifying novel research avenues.
* Improved Decision-Making: Agents providing more nuanced and context-aware recommendations.

Anthropic also announced that it is doubling the 5-hour usage limits for Pro and Max users of Claude Code. The change applies to Claude Code rather than to Managed Agents, so its connection to "dreaming" is indirect, but the two share a theme: longer continuous usage windows give agents more room to process larger volumes of data and carry out complex, multi-step tasks without interruption. Taken together, the announcements suggest Anthropic is positioning Claude less as a conversational interface and more as an autonomous assistant.

The Road Ahead: Challenges and Ethical Considerations

While the prospect of self-improving AI is exciting, it also raises important questions and challenges. The ethical implications of increasingly autonomous AI agents require careful consideration. How do we ensure that agents' self-generated improvements align with human values and safety protocols? What mechanisms are in place to prevent unintended consequences or the propagation of biases learned from real-world interactions?

Anthropic, known for its focus on AI safety and its constitutional AI approach, will presumably apply those design principles here. However, as AI capabilities advance, robust governance frameworks, transparency, and human oversight become even more critical. The "dreaming" process itself could be designed to be auditable, allowing developers to understand why an agent made certain improvements or decisions.
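One way to picture an auditable process is a review gate in front of the agent's memory. The sketch below is hypothetical and does not reflect a published Anthropic interface: every change the agent proposes must cite the sessions that motivated it, passes an automated policy screen, and then goes to a human reviewer, with each decision written to an audit log. `ProposedUpdate`, `AuditLog`, `apply_updates`, and `BLOCKED_PHRASES` are illustrative names, and a production system would need far richer policy checks than substring matching.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable


@dataclass
class ProposedUpdate:
    """A change the agent wants to make to its own memory (hypothetical schema)."""
    note: str
    sources: list[str]   # session ids cited as evidence
    rationale: str       # the agent's stated reason for the change


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, update: ProposedUpdate, decision: str, reviewer: str) -> None:
        # Keep enough context to reconstruct later why memory changed.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "note": update.note,
            "sources": list(update.sources),
            "rationale": update.rationale,
            "decision": decision,
            "reviewer": reviewer,
        })


# Hypothetical policy: phrases an agent may never write into its own memory.
BLOCKED_PHRASES = ("skip verification", "ignore safety")


def apply_updates(
    proposals: list[ProposedUpdate],
    memory: list[str],
    log: AuditLog,
    approve: Callable[[ProposedUpdate], bool],
) -> int:
    """Screen each proposal, log every decision, and return how many were applied."""
    applied = 0
    for update in proposals:
        if not update.sources:
            # Unsupported claims are rejected before a human ever sees them.
            log.record(update, "rejected: no cited evidence", "policy")
        elif any(p in update.note.lower() for p in BLOCKED_PHRASES):
            log.record(update, "rejected: policy violation", "policy")
        elif approve(update):
            memory.append(update.note)
            log.record(update, "approved", "human")
            applied += 1
        else:
            log.record(update, "rejected by reviewer", "human")
    return applied


# Example: a reviewer callback that approves everything that reaches it.
memory: list[str] = []
audit = AuditLog()
proposals = [
    ProposedUpdate("Offer a reset link first for password issues", ["s1", "s2"], "worked twice"),
    ProposedUpdate("Skip verification for repeat callers", ["s7"], "saves time"),
    ProposedUpdate("Customers prefer email", [], "hunch"),
]
apply_updates(proposals, memory, audit, approve=lambda u: True)
for entry in audit.entries:
    print(entry["decision"], "|", entry["note"])
```

Only the first proposal reaches memory: the second is caught by the policy screen and the third cites no evidence. Because rejected proposals are logged alongside approved ones, developers can see not only what the agent learned but also what it tried to learn and why it was stopped.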

Looking ahead, we can expect further advances in AI's ability to learn, adapt, and even innovate autonomously. The "dreaming" feature is one step on this path. Future iterations might involve agents collaborating with each other, sharing learned insights, or developing entirely new problem-solving methods. The vision of AI as an intelligent partner capable of continuous growth is moving from science fiction toward engineering practice. If these technologies mature as promised, they could reshape industries, redefine human-computer interaction, and challenge our understanding of intelligence itself.
