Meta AI's 'Incognito Chat' Challenges Privacy Norms in the AI Era

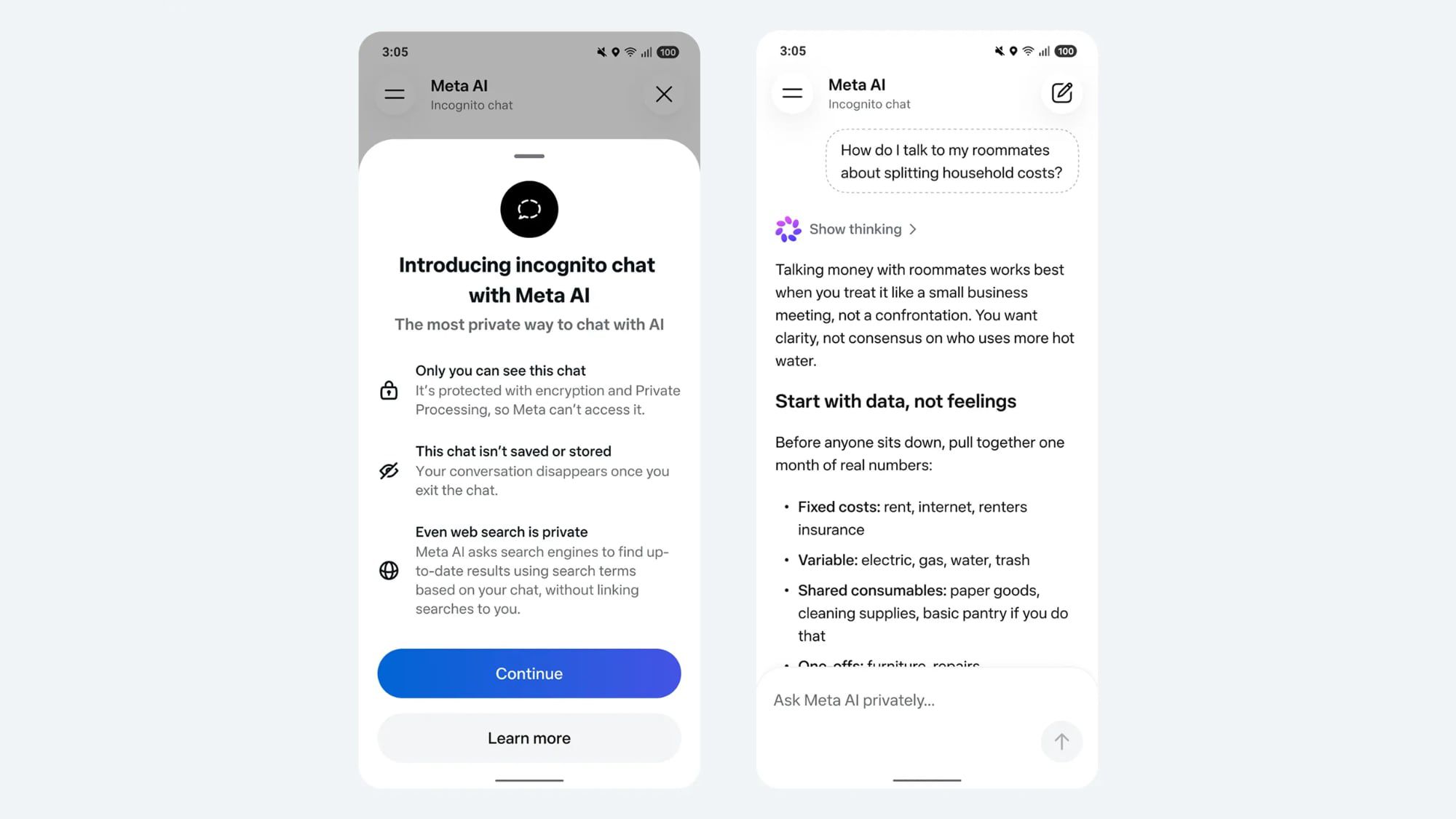

Meta has introduced an 'incognito chat' feature for its Meta AI app and WhatsApp integration, promising a completely private way to interact with artificial intelligence. CEO Mark Zuckerberg asserts this is the first major AI product where no conversation logs are stored, likening it to end-to-end encryption. This move directly addresses growing user concerns about data privacy in AI interactions, setting a new standard in the competitive landscape.

As artificial intelligence becomes part of daily life, data privacy has emerged as a central concern. From personal assistants to sophisticated generative models, the convenience offered by AI often comes with an implicit trade-off: the sharing of personal data. A recent announcement from Meta Platforms, led by CEO Mark Zuckerberg, signals a shift in this pattern. The introduction of an 'incognito chat' option for Meta AI, available on its dedicated app and integrated within WhatsApp, promises a "completely private way to interact with AI," a bold claim that could redefine user expectations for AI privacy.

The Dawn of Private AI Interaction

Zuckerberg presents the feature as an architectural decision rather than a marketing slogan. He emphasized that Meta AI's incognito mode is the "first major AI product where there is no log of conversations stored on servers." This is a critical distinction in a field where most AI services, including those from competitors, routinely store user interactions to improve models, personalize experiences, or support compliance and safety checks. The analogy to end-to-end encryption, a gold standard in secure messaging, is meant to underscore the commitment to user privacy. Zuckerberg explicitly stated that "no one will be able to read the AI conversations, not even Meta or WhatsApp." This level of assurance aims to foster trust at a time when skepticism about corporate data handling runs high.

The implications of this move are far-reaching. For users, it means the freedom to engage with an AI assistant on sensitive topics (health, finance, personal dilemmas, or creative brainstorming) without the lingering fear that their conversations will be analyzed, stored, or potentially exploited. This could unlock new use cases for AI, particularly in sectors requiring stringent confidentiality, such as legal or medical consultations, albeit within the current limitations of consumer-grade AI. For Meta, it is a strategic play to differentiate its AI offerings in a crowded market, positioning itself as a champion of user privacy against a backdrop of increasing regulatory scrutiny and public apprehension.

A Response to a Growing Privacy Crisis

Meta's pivot towards enhanced privacy for its AI is not occurring in a vacuum. The broader technology landscape is grappling with a burgeoning AI privacy crisis. The very nature of large language models (LLMs) often involves training on vast datasets, including publicly available information that may contain personal data. Furthermore, user interactions with these models are frequently used to further refine and improve them, leading to concerns about the inadvertent exposure or misuse of sensitive information.

Recent events have only exacerbated these fears. OpenAI, a leading AI developer, has faced multiple lawsuits over stored chat logs, with plaintiffs alleging that the company's practices violate privacy rights by collecting and retaining user conversations without explicit, informed consent. These legal challenges highlight the urgent need for robust privacy safeguards in AI development and deployment. Similarly, other major tech players have encountered criticism for their data retention policies, with some even facing temporary bans or restrictions in certain regions due to privacy concerns.

In this context, Meta's incognito chat feature serves as a direct counter-narrative. It's a proactive measure designed to assuage user anxieties and perhaps preempt future legal challenges. By offering an opt-in private mode, Meta acknowledges the legitimate concerns of its users and attempts to provide a solution that aligns with evolving privacy expectations. This could set a new benchmark for competitors, forcing them to re-evaluate their own data handling practices and potentially inspiring a broader industry shift towards more privacy-centric AI models.

Technical Underpinnings and User Control

While the concept of "incognito" mode is familiar from web browsers, its application to AI interactions presents unique technical challenges. The core promise, no server-side logging, implies an architecture designed to process user input and generate responses without retaining conversational history. This likely involves ephemeral processing, where data is handled in real time and immediately discarded, or localized processing on the user's device where feasible. The exact technical details of how Meta achieves this while maintaining the AI's functionality and performance will be crucial for independent verification and for building long-term trust.
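To make the idea of ephemeral processing concrete, here is a minimal Python sketch. It is not Meta's implementation, whose details have not been published; the names `EphemeralSession`, `incognito_chat`, and `echo_model` are hypothetical and exist only for illustration. The sketch keeps conversation turns in memory for the length of a session, never writes them to disk or a database, and discards them when the session ends.

```python
from contextlib import contextmanager
from typing import Callable, Iterator, List


class EphemeralSession:
    """Hypothetical chat session that keeps turns in memory only."""

    def __init__(self, generate: Callable[[List[str]], str]) -> None:
        # `generate` stands in for a model call; it receives the turns so far.
        self._generate = generate
        self._turns: List[str] = []

    def ask(self, prompt: str) -> str:
        # Context lives only in this in-process list; nothing is persisted.
        self._turns.append(f"user: {prompt}")
        reply = self._generate(self._turns)
        self._turns.append(f"assistant: {reply}")
        return reply

    def turn_count(self) -> int:
        return len(self._turns)

    def close(self) -> None:
        # Drop all conversational state when the session ends.
        self._turns.clear()


@contextmanager
def incognito_chat(generate: Callable[[List[str]], str]) -> Iterator[EphemeralSession]:
    """Yield a session and guarantee its history is discarded afterwards."""
    session = EphemeralSession(generate)
    try:
        yield session
    finally:
        session.close()


def echo_model(turns: List[str]) -> str:
    # Placeholder "model" (an assumption): reports how much context it saw.
    return f"seen {len(turns)} turn(s)"


if __name__ == "__main__":
    with incognito_chat(echo_model) as chat:
        print(chat.ask("Is this conversation stored?"))
    print(chat.turn_count())  # history has been cleared
```

Clearing a Python list does not securely wipe memory, and a real service would also have to keep prompts out of request logs, caches, and analytics pipelines. The sketch only illustrates the retention policy, not a verifiable guarantee.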

Crucially, the feature emphasizes user control. Users actively choose to initiate an incognito chat, implying that standard Meta AI interactions might still involve some form of data logging, albeit with Meta's existing privacy policies in place. This distinction is vital for transparency. It empowers users to make informed decisions about when and how they want their AI interactions to be handled, offering a tiered approach to privacy that caters to different levels of comfort and sensitivity.

The introduction of incognito chat also raises questions about the future development of Meta AI. If conversations are not logged, how will the model learn and improve from user feedback in this mode? This suggests a potential trade-off between privacy and model refinement, or it may indicate that Meta is exploring alternative, privacy-preserving methods for AI training and optimization, such as federated learning or differential privacy, which allow models to learn from decentralized data without directly accessing individual user information.
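For readers unfamiliar with the two techniques named above, the following Python sketch shows each in its simplest textbook form. Nothing here reflects how Meta trains its models; the function names, the example numbers, and the choice of a feedback count as the query are all assumptions made for illustration. `federated_average` combines model updates computed on separate devices without collecting the underlying conversations, and `laplace_count` applies the Laplace mechanism to release a count with noise calibrated to a privacy parameter `epsilon`.

```python
import random
from typing import List, Sequence, Tuple


def federated_average(updates: Sequence[Tuple[List[float], int]]) -> List[float]:
    """Weighted average of per-device updates (the core step of federated averaging).

    Each item is (update_vector, number_of_local_examples). Only these
    vectors leave the device; the raw data behind them does not.
    """
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("at least one local example is required")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for vector, n in updates:
        for i, value in enumerate(vector):
            averaged[i] += value * n / total
    return averaged


def laplace_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with Laplace noise of scale 1/epsilon.

    A counting query changes by at most 1 when one user is added or
    removed (sensitivity 1), so scale 1/epsilon gives epsilon-differential
    privacy for this single query.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    # The difference of two i.i.d. exponentials with rate epsilon is
    # Laplace-distributed with mean 0 and scale 1/epsilon.
    noise = rng.expovariate(epsilon) - rng.expovariate(epsilon)
    return true_count + noise


if __name__ == "__main__":
    # Two hypothetical devices with 1 and 3 local examples respectively.
    print(federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)]))
    # A hypothetical tally of positive feedback, released with noise.
    print(laplace_count(100, epsilon=1.0, rng=random.Random(0)))
```

A smaller `epsilon` adds more noise and gives stronger privacy at the cost of accuracy, which is one concrete form of the trade-off between privacy and model refinement described above.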

The Broader Impact: A New Standard for AI Privacy?

Meta's move has the potential to ripple across the entire AI industry. As consumers become more aware of their digital rights and the value of their data, features like incognito chat could become a competitive differentiator and eventually a standard expectation. Companies that fail to offer comparable privacy safeguards might find themselves at a disadvantage, struggling to attract and retain users who prioritize confidentiality.

Moreover, this development could influence regulatory bodies worldwide. Governments and privacy advocates are actively working to establish frameworks for AI governance, and Meta's initiative provides a tangible example of how privacy-by-design principles can be implemented in practical AI applications. It could serve as a blueprint or at least a point of reference for future regulations, encouraging a more privacy-conscious approach to AI development across the board.

However, the success of Meta AI's incognito chat will ultimately depend on several factors: the robustness of its technical implementation, the clarity of its communication to users, and Meta's ongoing commitment to upholding its privacy promises. In a world where trust in tech giants is often tenuous, this feature represents a step towards rebuilding that trust, offering users a glimpse into a future where the power of AI can be harnessed without sacrificing fundamental privacy rights. As AI continues its march into every facet of society, the battle for privacy will undoubtedly intensify, and Meta has just fired a significant shot in that evolving conflict.
